where algorithms strive to uncover patterns and make predictions, evaluating a model’s effectiveness is paramount. Two metrics often encountered in this evaluation process are model accuracy and model performance. While both seem to gauge a model’s competency, they paint distinct pictures. Understanding these distinctions is crucial for selecting the most appropriate metric for your specific machine learning task.

Model Accuracy: A Single Score, a Limited View

Model accuracy, expressed as a percentage, reflects the proportion of predictions a model makes correctly. Imagine a simple machine learning model predicting whether an email is spam or not spam. Model accuracy calculates the percentage of emails the model correctly classifies as spam or not spam. While seemingly straightforward, accuracy has limitations:

- Imbalanced Datasets: In datasets where one class significantly outnumbers the others (e.g., mostly non-spam emails), a model can achieve high accuracy simply by predicting the majority class most of the time. This doesn’t necessarily translate to good performance for the minority class (e.g., actual spam emails).

- Cost-Sensitive Learning: Not all errors are created equal. In some scenarios, misclassifying certain data points can have a higher cost than others. For instance, in a medical diagnosis system, a false negative (failing to detect a disease) could have more severe consequences than a false positive (mistakenly indicating a disease). Model accuracy doesn’t inherently account for these varying costs.
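The imbalanced-dataset pitfall above can be illustrated with a minimal sketch. The 95/5 class split and the always-predict-majority "model" are assumptions chosen for illustration, not figures from a real dataset.

```python
def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true labels."""
    correct = sum(1 for t, p in zip(y_true, y_pred) if t == p)
    return correct / len(y_true)

# Hypothetical inbox: 95 legitimate emails (0) and 5 spam emails (1).
y_true = [0] * 95 + [1] * 5

# A trivial "model" that always predicts the majority class (not spam).
y_pred = [0] * 100

majority_accuracy = accuracy(y_true, y_pred)

# Count how many of the actual spam emails were flagged as spam.
spam_caught = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)

print(f"Accuracy: {majority_accuracy:.2f}")
print(f"Spam emails caught: {spam_caught} of {sum(y_true)}")
```

The accuracy looks respectable even though the model never flags a single spam email, which is the situation the first bullet warns about.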

However, accuracy remains a valuable metric, particularly in situations where:

- The dataset is balanced: When the distribution of classes is relatively even, accuracy provides a good general sense of the model’s overall performance.

- The cost of errors is uniform: If misclassifying any data point carries a similar weight, accuracy offers a clear indicator of the model’s effectiveness.

Advantages of Model Accuracy:

- Easy to Understand: Accuracy is a readily grasped concept, making it a good starting point for gauging a model’s overall effectiveness, especially for simple classification tasks.

- Common Metric: Accuracy is a widely used metric, allowing for easy comparison of different models on the same task.

Limitations of Model Accuracy:

- Oversimplification of Reality: The real world is often messy. Relying solely on accuracy can be misleading, particularly in scenarios with imbalanced datasets. For instance, if only 1% of incoming emails are spam, a filter that labels every email as not spam reaches 99% accuracy while catching no spam at all.

- Doesn’t Consider Cost or Severity of Errors: Not all errors are created equal. Accuracy doesn’t account for the varying costs or severity of misclassifications. In some cases, a false positive (incorrectly classifying something as positive) might be less detrimental than a false negative (missing a true positive).
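To make the cost argument concrete, here is a small sketch in which two models have identical accuracy but very different error costs. The labels, the two sets of predictions, and the cost values (`FP_COST`, `FN_COST`) are hypothetical; in practice the costs would come from the application domain.

```python
def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true labels."""
    return sum(1 for t, p in zip(y_true, y_pred) if t == p) / len(y_true)

def count_errors(y_true, y_pred):
    """Return (false_positives, false_negatives) for binary 0/1 labels."""
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    return fp, fn

def total_cost(y_true, y_pred, fp_cost, fn_cost):
    """Sum of error counts weighted by their assumed per-error cost."""
    fp, fn = count_errors(y_true, y_pred)
    return fp * fp_cost + fn * fn_cost

# Hypothetical screening data: 1 = disease present, 0 = disease absent.
y_true  = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
model_a = [1, 1, 0, 0, 0, 0, 0, 0, 0, 0]  # misses two cases (2 false negatives)
model_b = [1, 1, 1, 1, 1, 1, 0, 0, 0, 0]  # raises two false alarms (2 false positives)

# Assumed costs: a missed disease is ten times worse than a false alarm.
FP_COST, FN_COST = 1, 10

acc_a, acc_b = accuracy(y_true, model_a), accuracy(y_true, model_b)
cost_a = total_cost(y_true, model_a, FP_COST, FN_COST)
cost_b = total_cost(y_true, model_b, FP_COST, FN_COST)

print(f"Model A: accuracy={acc_a:.2f}, cost={cost_a}")
print(f"Model B: accuracy={acc_b:.2f}, cost={cost_b}")
```

Both models make two mistakes out of ten, so accuracy cannot tell them apart; the weighted cost can.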

Model Performance: A Broader Perspective

Model performance encompasses a wider range of factors that contribute to a model’s effectiveness beyond just the raw number of correct predictions. Here’s how it expands upon the concept of accuracy:

- Metrics Beyond Accuracy: Performance can incorporate additional metrics depending on the task. For classification problems, metrics like precision (the share of predicted positives that are actually positive), recall (the share of actual positives that the model finds), and F1-score (the harmonic mean of precision and recall) can provide a more nuanced picture. In regression problems, metrics like mean squared error or R-squared can be employed.

- Cost-Sensitivity Integration: Performance metrics can be tailored to account for varying costs of errors. This is particularly valuable in scenarios where misclassification can have significant consequences.
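The classification metrics named above can be computed directly from counts of true positives, false positives, and false negatives. The following is a minimal sketch on made-up labels; returning 0.0 when a denominator is zero is a convention assumed here, not the only reasonable choice.

```python
def precision_recall_f1(y_true, y_pred):
    """Compute precision, recall, and F1 for binary 0/1 labels."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)

    # Precision: of everything predicted positive, how much was right?
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    # Recall: of everything actually positive, how much was found?
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    # F1: harmonic mean of precision and recall.
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)
    return precision, recall, f1

# Hypothetical labels: 5 positives and 5 negatives.
y_true = [1, 1, 1, 1, 1, 0, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 0, 1, 0, 0, 0, 0]

precision, recall, f1 = precision_recall_f1(y_true, y_pred)
print(f"precision={precision:.2f} recall={recall:.2f} f1={f1:.2f}")
```

Here the model is right on 7 of 10 examples, yet it finds only 3 of the 5 positives, a gap that recall exposes and accuracy hides.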

By considering model performance, you gain a more comprehensive understanding of a model’s strengths and weaknesses. This empowers you to:

- Identify Areas for Improvement: Performance metrics can highlight specific areas where the model struggles, guiding further development and refinement.

- Compare Models: When evaluating multiple models for a task, performance metrics computed on held-out data provide a more holistic comparison, enabling you to select the model that generalizes best to unseen data.

Key Parameters of Model Performance

Here are some key aspects that contribute to model performance:

- Accuracy: Undoubtedly, accuracy remains a crucial component of model performance.

- Precision and Recall: These metrics delve deeper into classification tasks, assessing how well the model identifies true positives and avoids false positives/negatives.

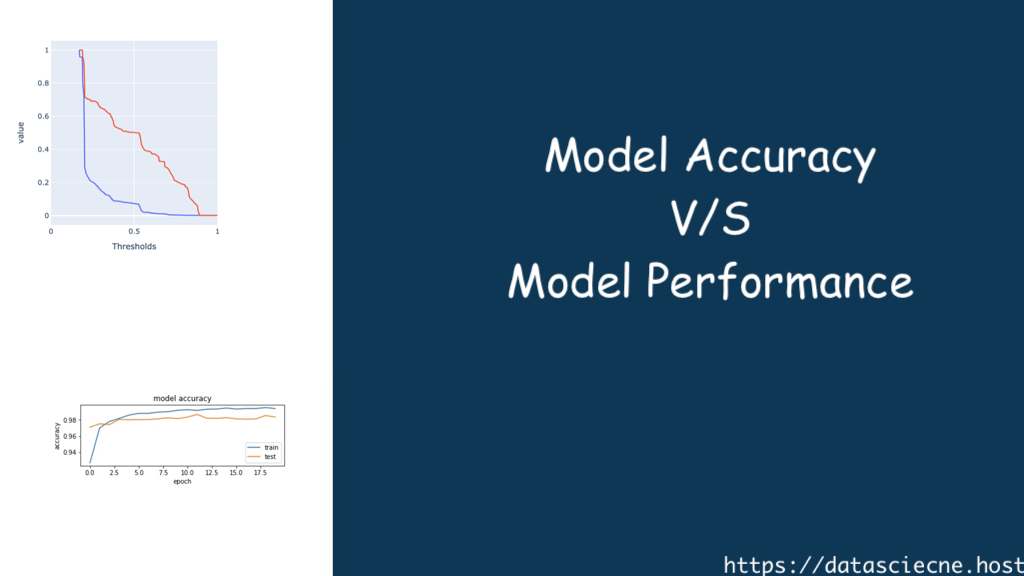

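- AUC-ROC (for classification): The ROC curve plots the true positive rate against the false positive rate across decision thresholds, and the area under it (AUC) summarizes in a single number the model’s ability to distinguish between positive and negative classes.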

- R-squared (for regression): This metric measures the proportion of variance in the target variable explained by the model.

- Loss Function: The loss function quantifies the difference between the model’s predictions and the actual values. Minimizing the loss function is crucial for model training.

- Generalizability: A model’s ability to perform well on unseen data, not just the data it was trained on, is a critical aspect of performance.

- Computational Efficiency: The time and resources required to train and use a model also factor into its overall performance.
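Two of the quantities in this list, AUC and R-squared, are easy to compute by hand on small inputs. The sketch below uses the pairwise-ranking interpretation of AUC (the probability that a randomly chosen positive receives a higher score than a randomly chosen negative) and the standard definition of R-squared as one minus the ratio of residual to total sum of squares. The function names and the sample data are illustrative assumptions.

```python
def roc_auc(y_true, scores):
    """AUC as the fraction of (positive, negative) pairs ranked correctly.

    Ties between a positive and a negative score count as half a win.
    """
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))

def r_squared(y_true, y_pred):
    """Proportion of variance in y_true explained by y_pred."""
    mean = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot

# Hypothetical classifier scores for two negatives and two positives.
auc = roc_auc([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8])

# Hypothetical regression targets and predictions.
r2 = r_squared([3.0, -0.5, 2.0, 7.0], [2.5, 0.0, 2.0, 8.0])

print(f"AUC: {auc:.2f}")
print(f"R-squared: {r2:.3f}")
```

The pairwise loop is quadratic in the number of examples, which is fine for a demonstration; library implementations typically use a sort-based approach instead.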

Choosing the Right Metric: A Balancing Act

The optimal metric for evaluating your model hinges on the specific characteristics of your machine learning task. Here are some key considerations:

- The Nature of the Problem: Classification, regression, or other types of machine learning tasks will have their own relevant performance metrics.

- Dataset Characteristics: The balance of classes within the dataset and the potential costs associated with misclassification errors should be factored in.

- Project Goals: The ultimate objective of your project can influence the choice of metrics. For instance, if interpretability is crucial, simpler metrics like accuracy might be more suitable.

In many cases, relying solely on a single metric (accuracy or a single performance metric) might not paint the entire picture. A well-rounded approach often involves considering a combination of metrics to gain a comprehensive understanding of a model’s performance.

How to Approach Model Evaluation

While metrics like accuracy and performance offer valuable insights, they shouldn’t be the sole criteria for evaluating your model. Here are some additional considerations for model evaluation:

- Interpretability: Understanding the factors influencing the model’s predictions can be crucial for building trust and ensuring ethical use of the model.

- Overfitting: A model with high accuracy on training data but poor performance on unseen data might be suffering from overfitting. Techniques like regularization and validation sets help mitigate this issue.

- Real-World Performance: Ultimately, the true test of a model lies in its ability to perform effectively in the real world. Monitor the model’s performance in production and adapt it as needed.
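The overfitting symptom described above, high accuracy on training data but poor accuracy on unseen data, can be reproduced with a deliberately extreme toy model. The `memorizer` below is a hypothetical lookup table that stores every training label and falls back to class 0 for inputs it has never seen; the data points are invented for illustration.

```python
def accuracy(model, data):
    """Fraction of (x, y) pairs for which model(x) equals y."""
    return sum(1 for x, y in data if model(x) == y) / len(data)

# Hypothetical (feature, label) pairs.
train = [(1, 0), (2, 1), (3, 1), (4, 0), (5, 1), (6, 0)]
validation = [(7, 1), (8, 0), (9, 1), (10, 1)]

# The "model" memorizes the training set exactly.
memory = dict(train)

def memorizer(x):
    # Return the stored label if x was seen in training, else default to 0.
    return memory.get(x, 0)

train_acc = accuracy(memorizer, train)
val_acc = accuracy(memorizer, validation)
gap = train_acc - val_acc

print(f"train accuracy:      {train_acc:.2f}")
print(f"validation accuracy: {val_acc:.2f}")
print(f"gap:                 {gap:.2f}")
```

A large gap between the two numbers is the signal to look for; comparing training and validation scores side by side is a simple first check before reaching for regularization.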

Conclusion: A Symphony of Metrics, Not a Solo Performance

Model accuracy and model performance are not rivals, but rather complementary concepts. Understanding their limitations and strengths empowers you to select the most appropriate metrics for evaluating your machine learning models. By employing a balanced approach, you can gain valuable insights into your model’s effectiveness and make informed decisions that propel your project towards success. Remember, in the intricate world of machine learning, a thorough evaluation through a combination of metrics is the key to unlocking the true potential of your models.